
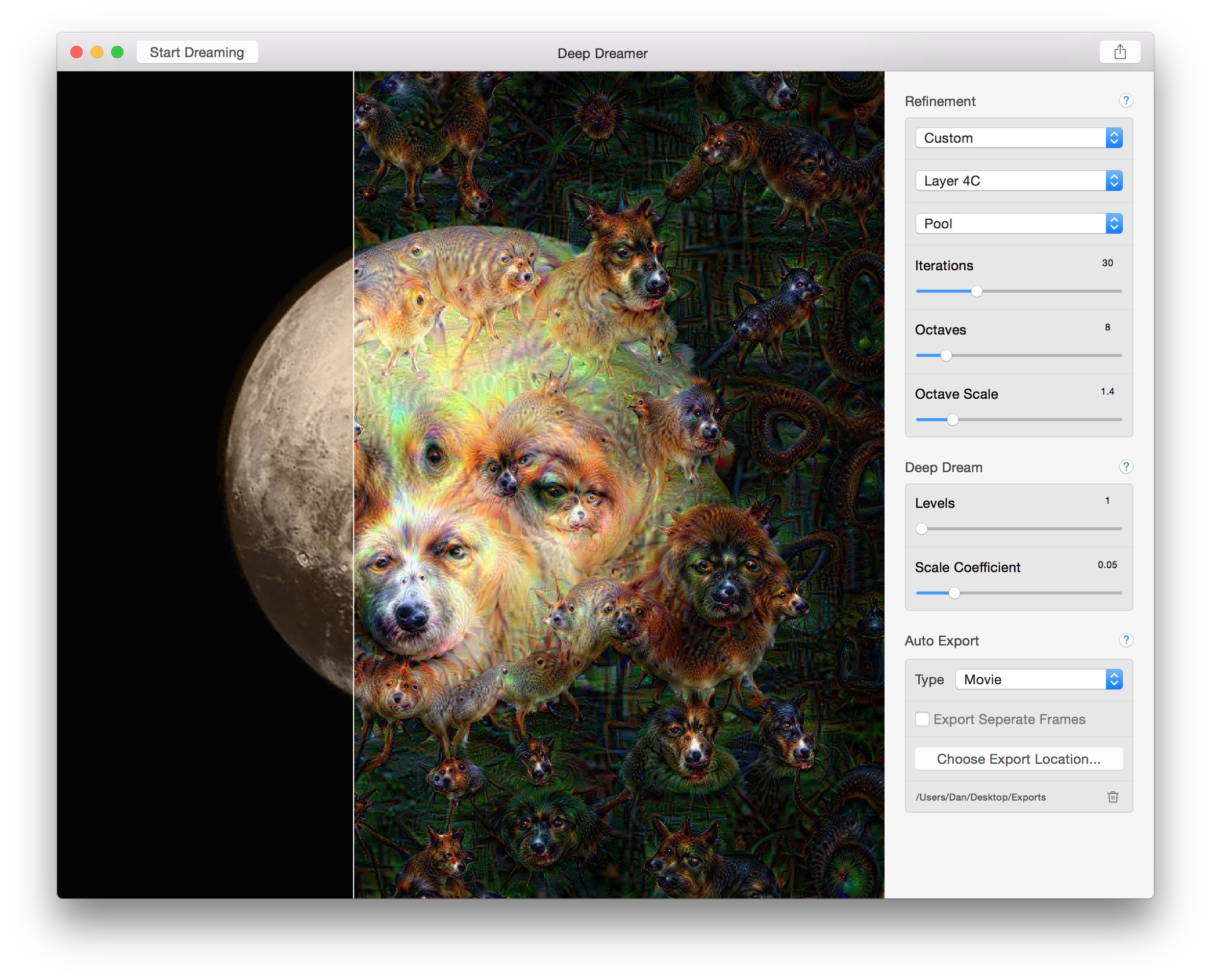

InceptionV3 has 11 concatenation layers, named 'mixed0' through 'mixed10'. Using different layers will result in different dream-like images. Deeper layers respond to higher-level features (such as eyes and faces), while earlier layers respond to simpler features (such as edges, shapes, and textures). Feel free to experiment with the selected layers, but keep in mind that deeper layers (those with a higher index) will take longer to train on since the gradient computation is deeper.

The outputs of the layers whose activations you want to maximize are collected in `layers`, and the feature extraction model is then created with `dream_model = tf.keras.Model(inputs=base_model.input, outputs=layers)`.

The loss is the sum of the activations in the chosen layers. The loss is normalized at each layer so the contribution from larger layers does not outweigh smaller layers. Normally, loss is a quantity you wish to minimize via gradient descent. In DeepDream, you will maximize this loss via gradient ascent. To compute it, the image is converted into a batch of size 1 and passed forward through the model to retrieve the activations.

Once you have calculated the loss for the chosen layers, all that is left is to calculate the gradients with respect to the image, and add them to the original image. Adding the gradients to the image enhances the patterns seen by the network. At each step, you will have created an image that increasingly excites the activations of certain layers in the network.
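To see why the loss is normalized per layer, here is a minimal sketch that uses plain NumPy arrays as stand-ins for real layer activations. The shapes, the values, and the names `calc_loss` and `raw_sum` are assumptions made for illustration; this is not the tutorial's TensorFlow code.

```python
import numpy as np

def calc_loss(layer_activations):
    """Sum of per-layer mean activations (each layer normalized by its size)."""
    return sum(float(np.mean(act)) for act in layer_activations)

def raw_sum(layer_activations):
    """Unnormalized alternative: a layer with more values contributes more."""
    return sum(float(np.sum(act)) for act in layer_activations)

# Hypothetical activations: a small, strongly excited layer and a large, weaker one.
small_layer = np.full((4, 4, 8), 1.0)      # 128 values
large_layer = np.full((32, 32, 64), 0.5)   # 65,536 values

print(calc_loss([small_layer, large_layer]))  # each layer counts once
print(raw_sum([small_layer, large_layer]))    # dominated by the large layer
```

With the mean, the strongly excited small layer contributes more than the weakly excited large one. With the raw sum, the large layer dominates simply because it has more values.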

Let's demonstrate how you can make a neural network "dream" and enhance the surreal patterns it sees in an image.

For this tutorial, let's use an image of a labrador (image cc-by: Von.grzanka). The image is downloaded and read into a NumPy array, then downsized with `original_img = download(url, max_dim=500)`, which makes it easier to work with.

Download and prepare a pre-trained image classification model. You will use InceptionV3, which is similar to the model originally used in DeepDream. Note that any pre-trained model will work, although you will have to adjust the layer names if you change this.

The idea in DeepDream is to choose a layer (or layers) and maximize the "loss" in a way that the image increasingly "excites" the layers. The complexity of the features incorporated depends on the layers you choose: lower layers produce strokes or simple patterns, while deeper layers give sophisticated features in images, or even whole objects.

The InceptionV3 architecture is quite large (for a graph of the model architecture see TensorFlow's research repo). For DeepDream, the layers of interest are those where the convolutions are concatenated.
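One way to build the feature extraction model can be sketched as follows. InceptionV3 names its concatenation layers 'mixed0' through 'mixed10'. The helper `build_dream_model` and the constant `MIXED_LAYER_NAMES` are hypothetical names introduced here, and TensorFlow is imported inside the function only so that the name list can be inspected without loading it.

```python
# Names of InceptionV3's concatenation layers: 'mixed0' through 'mixed10'.
MIXED_LAYER_NAMES = [f"mixed{i}" for i in range(11)]

def build_dream_model(layer_names, weights="imagenet"):
    """Return a Keras model that maps an image batch to the chosen activations.

    Hypothetical helper: the name and the validation step are assumptions.
    """
    unknown = [name for name in layer_names if name not in MIXED_LAYER_NAMES]
    if unknown:
        raise ValueError(f"not an InceptionV3 'mixed' layer: {unknown}")

    import tensorflow as tf  # deferred import; loading TensorFlow is slow

    # include_top=False drops the classification head; only features are needed.
    base_model = tf.keras.applications.InceptionV3(
        include_top=False, weights=weights)
    outputs = [base_model.get_layer(name).output for name in layer_names]
    return tf.keras.Model(inputs=base_model.input, outputs=outputs)
```

For example, `build_dream_model(["mixed3", "mixed5"])` would return a model with two outputs, one per layer. That particular pair is an arbitrary choice for illustration, and the first call downloads the ImageNet weights.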

Similar to when a child watches clouds and tries to interpret random shapes, DeepDream over-interprets and enhances the patterns it sees in an image. It does so by forwarding an image through the network, then calculating the gradient of a particular layer's activations with respect to the image. The image is then modified to increase these activations, enhancing the patterns seen by the network, and resulting in a dream-like image. This process was dubbed "Inceptionism" (a reference to InceptionNet, and the movie Inception).
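The loop described above (forward pass, gradient with respect to the image, image update) can be sketched on a toy problem. This is an illustration under stated assumptions, not the tutorial's implementation: a single random linear layer with a softplus activation stands in for the network, the gradient is written out by hand rather than obtained from automatic differentiation, and dividing the gradient by its standard deviation is a common stabilizing choice assumed here. All names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.normal(size=(8, 16))             # hypothetical layer: 8 units over 16 "pixels"
image = rng.uniform(-1.0, 1.0, size=16)  # hypothetical flattened image

def loss(img):
    """Mean softplus activation of the toy layer."""
    return float(np.mean(np.logaddexp(0.0, W @ img)))

def grad_wrt_image(img):
    """Analytic gradient of the loss with respect to the image."""
    sigmoid = 1.0 / (1.0 + np.exp(-(W @ img)))  # derivative of softplus
    return W.T @ sigmoid / W.shape[0]

def dream(img, steps=20, step_size=0.01):
    """Gradient ascent: repeatedly add the normalized gradient to the image."""
    img = img.copy()
    for _ in range(steps):
        g = grad_wrt_image(img)
        g = g / (g.std() + 1e-8)   # keep step sizes comparable across steps
        img = img + step_size * g  # ascent, so the gradient is added
    return img

dreamed = dream(image)
print(loss(image), loss(dreamed))  # the second value is larger
```

The only difference from ordinary gradient descent is the sign of the update: the gradient is added to the image instead of subtracted from the weights.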

This tutorial contains a minimal implementation of DeepDream, as described in this blog post by Alexander Mordvintsev. DeepDream is an experiment that visualizes the patterns learned by a neural network.
